Artificial intelligence systems powered by large language models are now embedded in everyday tools—search engines, chatbots, writing assistants, and enterprise automation platforms. Yet the conversation around AI often focuses only on what these models can do, not where they struggle.

Understanding LLM limitations and hallucinations is critical for organizations that rely on AI to produce content, automate support, or analyse information. Even the most advanced models can generate incorrect facts, fabricate sources, or misinterpret context.

For companies exploring AI adoption in Toronto, this issue is more than theoretical. When a model produces inaccurate information in customer-facing environments, the consequences can affect trust, compliance, and brand credibility.

To work responsibly with AI, businesses must understand why these limitations occur and how they can be managed.

What Are LLM Hallucinations?

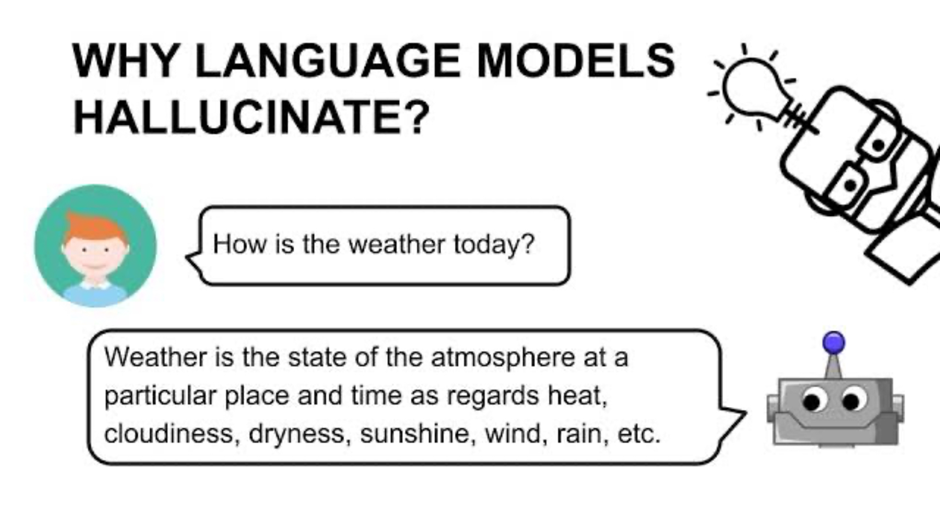

Before working around LLM limitations, it helps to define hallucinations. A hallucination occurs when a large language model generates plausible-sounding information that is inaccurate or entirely made up. The model isn’t “lying.” It is simply outputting the most probable words based on what it has learned.

This is why hallucinated answers often appear confident and detailed. The model has learned patterns of language, not verified knowledge.

Common examples of hallucinations include:

• Fabricated academic citations

• Incorrect statistics

• Imaginary product features

• Wrong historical facts

• Misinterpreted technical explanations

These errors become especially noticeable when users rely on AI-generated content for decision-making or research.

Why Large Language Models Hallucinate

Many people assume hallucinations occur because the AI system is broken or poorly trained. In reality, hallucinations are a natural consequence of how large language models operate.

These models work by predicting the next word in a sequence. They do not “know” information in the same way a human expert does. Instead, they identify statistical relationships in enormous datasets.

Several factors contribute to hallucinations:

Predictive nature of language models

An LLM predicts likely words rather than verifying truth. If a question resembles patterns from its training data, it will generate a response—even when certainty is low.
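As a rough illustration of this predictive behaviour, the toy sketch below samples a "next word" from a probability table. The prompt, the words, and the probabilities are invented for the example and do not come from any real model; a real LLM computes a distribution like this over a far larger vocabulary.

```python
import random

# Hypothetical next-word probabilities for the prompt
# "The capital of Australia is". The numbers are invented for illustration.
NEXT_WORD_PROBS = {
    "Canberra": 0.55,   # correct
    "Sydney": 0.35,     # plausible but wrong
    "Melbourne": 0.10,  # plausible but wrong
}

def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[word] for word in words]
    return rng.choices(words, weights=weights, k=1)[0]

def most_likely_word(probs):
    """Return the single highest-probability word."""
    return max(probs, key=probs.get)

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_next_word(NEXT_WORD_PROBS, rng) for _ in range(1000)]
wrong = sum(1 for word in samples if word != "Canberra")

print(most_likely_word(NEXT_WORD_PROBS))
print(f"{wrong} of 1000 sampled completions were wrong")
```

Nothing in this loop checks whether a word is true. A wrong completion is produced by exactly the same mechanism as a right one, which is why hallucinated text can read just as fluently as accurate text.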

Incomplete training data

No dataset contains every fact. When information is missing, the model fills the gap with patterns that resemble existing knowledge.

Ambiguous prompts

When a question lacks context, the model may interpret it incorrectly and generate a confident but wrong response.

Over-generalization

If a model learns a rule that works in many situations, it may apply that rule even when it should not.

For companies adopting enterprise AI solutions in Hamilton, these factors highlight why human review remains essential.

7 Real Limitations of Large Language Models

LLMs are powerful tools, but they have boundaries. Understanding those boundaries prevents unrealistic expectations.

Below are several limitations that appear consistently across AI systems.

1. Lack of Real Understanding

Despite impressive output, LLMs do not truly understand language. They recognise patterns.

This difference becomes clear when the model encounters complex reasoning or unfamiliar scenarios. The system can generate a convincing explanation while misunderstanding the underlying concept.

For businesses experimenting with AI automation in Ontario, this limitation often appears when models handle nuanced customer questions.

2. Fabricated References and Citations

Academic users frequently notice this issue first. When asked for references, a model may generate realistic-looking journal articles that do not exist.

The titles appear credible. Author names may even resemble real researchers.

However, the sources are invented.

This happens because the model has learned how citations are structured but cannot verify whether a specific paper actually exists.
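One simple safeguard is to check every generated reference against a list of sources you already trust. The sketch below assumes a hypothetical `TRUSTED_TITLES` set, for example exported from a reference manager; the second title in the usage example is a deliberately made-up placeholder.

```python
# Hypothetical trusted bibliography; titles are stored in normalized form.
TRUSTED_TITLES = {
    "attention is all you need",
}

def normalize(title):
    """Lowercase the title and collapse repeated whitespace."""
    return " ".join(title.lower().split())

def split_citations(generated_titles, trusted_titles):
    """Separate titles found in the trusted set from those that are not."""
    verified, unverified = [], []
    for title in generated_titles:
        if normalize(title) in trusted_titles:
            verified.append(title)
        else:
            unverified.append(title)
    return verified, unverified

verified, unverified = split_citations(
    ["Attention Is All You Need", "Placeholder Title That Does Not Exist"],
    TRUSTED_TITLES,
)
print("verified:", verified)
print("needs manual check:", unverified)
```

An exact-title match is a deliberately strict design choice: anything that is not found is routed to a person rather than assumed to be real.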

3. Weakness in Numerical Accuracy

Large language models are not designed for complex mathematics or financial calculations.

While simple arithmetic often works, multi-step calculations can produce inconsistent results.

In many workflows, combining AI language models with deterministic systems such as calculators or databases produces more reliable outcomes.
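A minimal sketch of that pairing: the language model is asked only to produce an arithmetic expression, and a small deterministic evaluator computes the result. The `evaluate` helper below is an illustrative assumption, not part of any particular AI product; it supports only numbers and the four basic operators.

```python
import ast
import operator

# Operators the evaluator is allowed to apply.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def evaluate(expression):
    """Evaluate +, -, *, / on plain numbers without calling eval()."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        # Anything else (names, function calls, etc.) is rejected.
        raise ValueError("unsupported expression")

    return walk(ast.parse(expression, mode="eval"))

# Suppose the model turned "12 monthly payments of 1200, minus a 350 discount"
# into the expression below; the arithmetic itself is done deterministically.
print(evaluate("1200 * 12 - 350"))  # 14050
```

The same input always yields the same result, which is the property multi-step calculations need and free-form text generation does not guarantee.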

4. Outdated Knowledge

Most LLMs are trained on data collected during a specific time period. Unless connected to real-time information sources, their knowledge eventually becomes outdated.

For example, policy changes, market data, or product updates may not appear in the model’s responses.

Companies using AI tools for digital marketing in Toronto sometimes notice this when the system references outdated search algorithms or platform features.

5. Sensitivity to Prompt Wording

Small changes in a prompt can produce dramatically different responses.

A vague question may generate speculation, while a structured prompt produces a clear answer.

This behaviour has led to the rise of prompt engineering, where users design prompts carefully to guide the model’s reasoning.

6. Context Window Constraints

Language models have a limit to how much information they can process at once. This is known as the context window.

When conversations become long, earlier information may drop out of memory. The model might then repeat questions or contradict previous statements.
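The effect can be sketched with a simple trimming routine that keeps only the most recent messages that fit a token budget. Counting one token per word is a crude stand-in for a real tokenizer, and the budget of 10 is an arbitrary value chosen for the example.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def trim_history(messages, max_tokens):
    """Keep the most recent messages whose combined size fits max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # this message and everything older is dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept

history = [
    "My order number is 12345",      # 5 tokens
    "It arrived damaged",            # 3 tokens
    "I would like a refund please",  # 6 tokens
]

print(trim_history(history, max_tokens=10))
# ['It arrived damaged', 'I would like a refund please']
```

The order number falls outside the budget, so a chatbot working from the trimmed history would have to ask for it again. Practical systems often reduce this by summarising older turns or storing key facts separately.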

For customer support chatbots built with AI conversational systems in Hamilton, managing context effectively becomes important.

7. Overconfidence in Uncertain Answers

One of the most challenging aspects of AI output is confidence.

LLMs often deliver responses with the same tone regardless of certainty. A guess may appear as confident as a verified fact.

Without external validation, users may assume the information is accurate.

This is why companies deploying AI knowledge assistants in Ontario frequently combine them with curated internal databases.
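Some model APIs can return a log probability for each generated token. Assuming those values are available, one rough heuristic is to average them and send low-scoring answers to a person. The function names and the 0.7 threshold below are illustrative assumptions, and token probability measures how expected the wording was, not whether the statement is true.

```python
import math

def average_confidence(token_logprobs):
    """Geometric mean of the token probabilities (exp of the mean log probability)."""
    return math.exp(sum(token_logprobs) / len(token_logprobs))

def needs_review(token_logprobs, threshold=0.7):
    """Flag an answer whose average token probability falls below the threshold."""
    return average_confidence(token_logprobs) < threshold

# Invented log probabilities for two short answers.
confident_answer = [math.log(0.9), math.log(0.9), math.log(0.9)]
uncertain_answer = [math.log(0.9), math.log(0.3), math.log(0.5)]

print(needs_review(confident_answer))  # False
print(needs_review(uncertain_answer))  # True
```

A score like this can help prioritise review, but it does not replace checking answers against a curated source.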

How Businesses Can Reduce LLM Hallucinations

While hallucinations can’t be completely prevented, there are ways to minimise their impact.

Companies that use AI in day-to-day work tend to combine several approaches.

1. Retrieval-Augmented Generation: This method links the language model to a trusted source of information. Rather than answering from its training data alone, the model first retrieves relevant passages from that source and then generates its response from them.

2. Structured Prompts: Clear prompts improve accuracy. Context, examples, and restrictions help keep the model on track.

3. Human Review Systems: Human review is still needed for high-stakes uses, such as legal documents, financial reports or technical reports.

4. Model Fine-Tuning: Some businesses fine-tune models using their own data. This aligns the model’s responses with corporate knowledge.
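The first two techniques in the list above can be sketched together: retrieve the most relevant trusted passage, then place it in a structured prompt that restricts the model to that context. The documents, the word-overlap scoring, and the prompt wording are all invented for illustration; production systems typically use embedding-based search over a document store.

```python
import re

# Hypothetical knowledge base with invented policy statements.
DOCUMENTS = [
    "Refunds are accepted within 30 days of purchase.",
    "Support is available Monday to Friday.",
    "Shipping to Canada takes 3 to 5 business days.",
]

def tokenize(text):
    """Split text into a set of lowercase words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_prompt(question, documents):
    """Build a structured prompt that limits the model to retrieved context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

print(build_prompt("How long does shipping to Canada take?", DOCUMENTS))
```

The resulting prompt would then be sent to whichever model the business uses. Because the answer is grounded in retrieved text, a reviewer can trace it back to a specific source.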

How Marketers Should Approach LLM Limitations

Marketing teams now routinely use AI to produce content and conduct research. Hallucinations can quietly introduce errors into that content. Google is improving how it identifies unreliable information, so inaccuracies that appear repeatedly may affect reliability signals and rankings.

Content production teams using AI-supported SEO in Toronto build review and fact-checking steps into their authoring workflows.

Likewise, marketing agencies that offer AI content optimisation services in Hamilton prioritise human oversight of AI processes.

Combining AI-assisted drafting with human fact-checking is generally the most effective approach.

The Future of AI Reliability

AI systems are improving rapidly. Emerging model designs hallucinate less and reason better.

There are also experiments with hybrid symbolic-neural models. These approaches attempt to combine statistical language models with explicit, structured knowledge.

Firms investing in AI adoption in Ontario are monitoring these developments closely, because dependability will determine how far AI can be trusted with critical functions.

Final Thoughts

Large language models mark a new milestone in how humans interact with computers. They generate articles, summaries, explanations, translations and more in seconds.

But they have limitations, and they hallucinate.

Understanding LLM limitations and hallucinations helps companies use AI with appropriate caution. Paired with human checks, consistent data and clear processes, these models become much more reliable.

Businesses that use AI as a guide, rather than an oracle, typically get the most benefit from the technology.

FAQs

What are LLM hallucinations?

LLM hallucinations occur when a large language model generates information that sounds convincing but is incorrect or fabricated. The model predicts language patterns rather than verifying facts.

Why do AI language models hallucinate?

Hallucinations happen because AI language models rely on statistical predictions. If the model lacks reliable information about a topic, it may generate a plausible answer instead of admitting uncertainty.

Can hallucinations in AI be prevented?

Hallucinations cannot be removed entirely, but techniques like retrieval-augmented generation, better prompts, and human review significantly reduce them.

Are LLM hallucinations dangerous for businesses?

They can be. If an AI system provides inaccurate information in customer support, legal documentation, or financial reports, the errors may affect credibility or compliance.

How can companies use AI safely?

Businesses often combine AI language models with verified databases, internal knowledge systems, and human oversight to ensure accuracy.