When evaluating LLMs vs Traditional AI Models, most business leaders assume they are two versions of the same technology. They are not. Their architectures, training methods, scalability limits, and cost implications are fundamentally different.

I’ve seen companies invest in the wrong AI stack simply because “AI” sounded like one bucket. It isn’t. If you’re running operations, marketing, SaaS, analytics, or automation projects, understanding the difference can save months of misaligned implementation.

This guide breaks down the technical distinctions, practical implications, and business use cases — without hype.

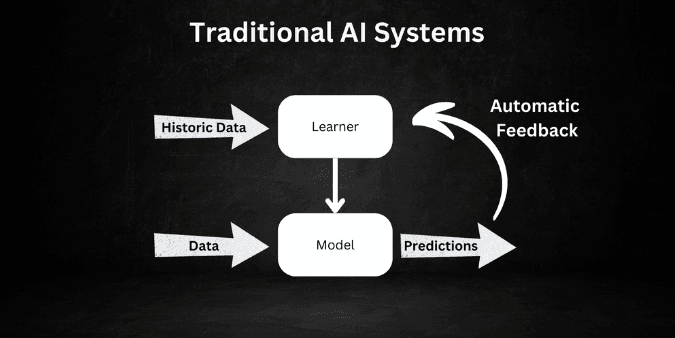

What Are Traditional AI Models?

Before Large Language Models (LLMs) became mainstream, most AI systems were rule-driven or trained on narrow datasets.

Traditional AI models typically include:

- Machine Learning models

- Decision Trees

- Support Vector Machines

- Random Forest algorithms

- Linear Regression models

- Rule-based automation systems

These models are designed for specific tasks. Fraud detection. Demand forecasting. Email classification. Inventory optimization.

They perform extremely well — but within clearly defined boundaries.

For example:

- A retail forecasting model predicts next month’s demand.

- A credit scoring model evaluates loan eligibility.

- A recommendation engine suggests products.

Each system is trained for one objective.

That focus is both their strength and their limitation.
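To make "one objective" concrete, here is a minimal sketch of a retail demand forecaster in the spirit of the linear regression models listed above. It fits an ordinary least-squares trend line to past monthly sales and predicts next month. The function name and sales figures are hypothetical, and a production forecaster would also account for seasonality, promotions, and other drivers.

```python
def forecast_next_month(monthly_sales: list[float]) -> float:
    """Fit y = a + b*t by ordinary least squares and predict the value at t = n."""
    n = len(monthly_sales)
    if n < 2:
        raise ValueError("need at least two months of history")
    ts = range(n)  # month index 0..n-1
    mean_t = sum(ts) / n
    mean_y = sum(monthly_sales) / n
    # Slope b = cov(t, y) / var(t); intercept a = mean_y - b * mean_t
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, monthly_sales))
    var = sum((t - mean_t) ** 2 for t in ts)
    b = cov / var
    a = mean_y - b * mean_t
    return a + b * n  # prediction for the next (unseen) month

# Hypothetical unit sales for the last six months
history = [120, 130, 125, 140, 150, 155]
print(round(forecast_next_month(history), 1))  # → 161.7
```

The model answers exactly one question, next month's demand, and nothing else. That narrowness is what makes it cheap, fast, and easy to validate.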

What Are LLMs?

Large Language Models (LLMs) are deep neural networks trained on massive text datasets. Unlike traditional systems, they are pre-trained on broad, general-purpose data and then adapted to many different tasks.

Popular examples include:

These models are built on the transformer architecture, which enables them to:

- Generate human-like text

- Understand context across long, detailed documents

- Perform reasoning tasks

- Write code

- Summarize reports

- Answer open-ended queries

Unlike traditional AI models, LLMs are general-purpose systems.
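The core operation inside a transformer is attention. Each token's representation is updated as a weighted average of value vectors, with weights derived from how similar that token's query is to every key. The sketch below implements scaled dot-product attention, softmax(QKᵀ/√d)·V, in plain Python on tiny hand-written vectors. Real models add learned projection matrices, many attention heads, and GPU tensor libraries. None of that is shown here.

```python
import math

def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q: list[list[float]], K: list[list[float]], V: list[list[float]]) -> list[list[float]]:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V.

    Q, K, V are lists of row vectors, one row per token."""
    d = len(K[0])
    out = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in K]
        weights = softmax(scores)
        # Output row = weighted average of the value vectors
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

# Three tokens with 2-dimensional toy embeddings (illustrative numbers only)
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(X, X, X):
    print([round(x, 3) for x in row])
```

Because every output row mixes information from all tokens, the model can relate words that are far apart in a document. This is the mechanism behind the long-context understanding listed above.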

Core Differences: LLMs vs Traditional AI Models

Let’s break this down practically.

1. Architecture

Traditional AI:

- Built using statistical or shallow machine learning models

- Designed for structured datasets

- Limited contextual understanding

LLMs:

- Based on deep neural networks

- Contain billions of parameters, trained on massive datasets

- Understand semantic relationships and context

A traditional fraud detection system analyzes predefined risk variables. An LLM can analyze the complaint email, the transaction history summary, and customer tone — simultaneously.

That’s a major difference.
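The contrast shows up directly in the inputs each system accepts. A traditional fraud model only ever sees a fixed vector of predefined risk variables. An LLM-based check can receive a single text prompt that bundles the complaint email, a transaction-history summary, and an instruction about tone. All field names, the prompt wording, and the sample data below are hypothetical. The actual LLM call is left out because it depends on the vendor SDK.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    amount: float
    country_mismatch: bool  # card country differs from merchant country
    txns_last_hour: int

def risk_features(txn: Transaction) -> list[float]:
    """Traditional model input: a fixed, predefined numeric feature vector."""
    return [txn.amount, float(txn.country_mismatch), float(txn.txns_last_hour)]

def build_fraud_prompt(complaint_email: str, history_summary: str) -> str:
    """LLM input: unstructured text from several sources combined into one prompt."""
    return (
        "You are reviewing a possible fraud case.\n"
        f"Customer email:\n{complaint_email}\n\n"
        f"Transaction history summary:\n{history_summary}\n\n"
        "Assess the fraud risk and describe the customer's tone "
        "(for example calm, urgent, or angry)."
    )

txn = Transaction(amount=950.0, country_mismatch=True, txns_last_hour=4)
print(risk_features(txn))  # → [950.0, 1.0, 4.0]
print(build_fraud_prompt("I never made this purchase!", "3 foreign charges in 1 hour"))
```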

2. Training Approach

Traditional AI:

- Trained on specific labeled datasets

- Requires clean, structured data

- Retraining needed for new tasks

LLMs:

- Pre-trained on massive unstructured datasets

- Fine-tuned using smaller datasets

- Can perform zero-shot or few-shot learning

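This flexibility can reduce development time significantly, because a new task may need only a prompt or a small fine-tuning dataset instead of a freshly labeled dataset and a new model.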
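In practice, few-shot learning often means putting a handful of labeled examples directly into the prompt rather than training a model. Here is a minimal sketch for a hypothetical ticket-routing task. The labels and example texts are illustrative, and sending the prompt to a model is left out.

```python
def few_shot_prompt(task: str, examples: list[tuple[str, str]], query: str) -> str:
    """Build a few-shot prompt: instruction, labeled examples, then the new input."""
    lines = [task, ""]
    for text, label in examples:
        lines.append(f"Input: {text}\nLabel: {label}\n")
    # Leave the final label blank so the model completes it
    lines.append(f"Input: {query}\nLabel:")
    return "\n".join(lines)

examples = [
    ("My invoice shows the wrong amount", "billing"),
    ("The app crashes when I log in", "technical"),
]
print(few_shot_prompt("Classify each support ticket as billing or technical.",
                      examples, "I was charged twice this month"))
```

If you pass an empty `examples` list, the same function produces a zero-shot prompt. Switching tasks means editing text, not retraining.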

3. Use Case Breadth

Traditional AI excels at:

- Demand forecasting

- Supply chain optimization

- Risk modeling

- Predictive analytics

- Classification problems

LLMs excel at:

- Conversational AI

- Knowledge retrieval

- Content automation

- Code assistance

- Long-form document analysis

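The real shift is flexibility: a single LLM can handle many of these language tasks, whereas traditional AI usually needs a separate model for each problem.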

4. Data Requirements

Traditional AI requires:

- Clean tabular data

- Feature engineering

- Domain-specific preprocessing

LLMs:

- Handle unstructured data

- Work with documents, PDFs, chats, transcripts

- Require prompt engineering instead of heavy feature engineering
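Working with documents, PDFs, and transcripts usually starts with splitting long text into overlapping chunks. Each chunk then fits within a model's context window or an embedding model's input limit. This is a minimal sketch that assumes the text has already been extracted from the PDF. It counts words rather than model tokens, and the default chunk size and overlap are arbitrary placeholders.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of `chunk_size` words; consecutive chunks share `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reaches the end of the text
    return chunks

doc = " ".join(f"w{i}" for i in range(10))
print(chunk_words(doc, chunk_size=4, overlap=1))
# → ['w0 w1 w2 w3', 'w3 w4 w5 w6', 'w6 w7 w8 w9']
```

The overlap keeps a sentence that straddles a boundary visible in both neighboring chunks. This is one reason "prompt engineering instead of feature engineering" still involves some data preparation.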

Businesses dealing with large knowledge bases often prefer LLM-based systems.

For example, enterprises building AI knowledge assistants in Toronto have increasingly leaned toward LLM-powered retrieval systems instead of traditional keyword search.
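A small sketch shows why retrieval can beat keyword search. Keyword search only matches shared words. Embedding-based retrieval compares vectors, so a query can find a relevant document that has no words in common with it. The three-dimensional "embeddings" below are hand-written stand-ins. In a real system they would come from an embedding model, and the search would run in a vector database.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def keyword_search(query: str, docs: dict[str, str]) -> list[str]:
    """Return IDs of documents sharing at least one word with the query."""
    q = set(query.lower().split())
    return [doc_id for doc_id, text in docs.items() if q & set(text.lower().split())]

def vector_search(query_vec: list[float], doc_vecs: dict[str, list[float]], k: int = 1) -> list[str]:
    """Return the k document IDs whose embeddings are most similar to the query."""
    ranked = sorted(doc_vecs, key=lambda d: cosine(query_vec, doc_vecs[d]), reverse=True)
    return ranked[:k]

docs = {
    "refund-policy": "customers may return items within 30 days",
    "shipping": "orders ship within two business days",
}
# Hypothetical embeddings: the first axis loosely encodes "returns/refunds"
doc_vecs = {"refund-policy": [0.9, 0.1, 0.0], "shipping": [0.1, 0.9, 0.1]}
query = "how do I get my money back"
query_vec = [0.8, 0.2, 0.1]

print(keyword_search(query, docs))         # → []
print(vector_search(query_vec, doc_vecs))  # → ['refund-policy']
```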

5. Explainability

Traditional models are easier to interpret:

- Feature importance analysis

- Clear mathematical relationships

- More transparent decision paths
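For a linear model, explainability can be as simple as reading off each feature's contribution (weight × value) to the final score. Here is a minimal sketch with hypothetical credit-scoring weights. They are not taken from any real model.

```python
def explain_linear(weights: dict[str, float], bias: float, features: dict[str, float]):
    """Return the score and each feature's additive contribution (weight * value)."""
    contributions = {name: weights[name] * features[name] for name in weights}
    score = bias + sum(contributions.values())
    return score, contributions

weights = {"debt_to_income": -2.0, "years_employed": 0.5, "late_payments": -1.5}
applicant = {"debt_to_income": 0.4, "years_employed": 6, "late_payments": 1}
score, parts = explain_linear(weights, bias=1.0, features=applicant)

# List the largest contributions first: together they fully account for the score
for name, value in sorted(parts.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {value:+.2f}")
print(f"score: {score:+.2f}")
```

This direct readout works because the model is linear. An LLM with billions of parameters has no comparably simple decomposition.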

LLMs:

- Largely operate as black-box systems

- Harder to explain: tracing why a specific output was produced is difficult

- Rely on probabilistic next-token prediction

If regulatory compliance is critical (like finance or healthcare), this matters.
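Probabilistic token prediction means that at each step the model assigns a score (logit) to every token in its vocabulary. It converts those scores into probabilities with a softmax, then picks or samples the next token. The toy sketch below uses a four-word vocabulary and made-up logits. Temperature is a common sampling parameter; it is included to show why the same prompt can produce different outputs.

```python
import math
import random

def token_probs(logits: dict[str, float], temperature: float = 1.0) -> dict[str, float]:
    """Softmax over logits; a lower temperature sharpens the distribution."""
    scaled = {tok: l / temperature for tok, l in logits.items()}
    m = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - m) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def sample_next(logits: dict[str, float], temperature: float = 1.0, rng=random) -> str:
    """Sample one token according to its probability."""
    probs = token_probs(logits, temperature)
    return rng.choices(list(probs), weights=list(probs.values()), k=1)[0]

# Made-up logits for the word after "The loan application was ..."
logits = {"approved": 2.0, "denied": 1.5, "reviewed": 0.5, "banana": -3.0}
print({t: round(p, 3) for t, p in token_probs(logits).items()})
# Fixed seed for reproducibility; without one, repeated calls can return different tokens
print(sample_next(logits, rng=random.Random(0)))
```

Nothing in these probabilities records *why* "approved" outscored "denied". That gap is what makes LLM outputs hard to justify to a regulator.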

6. Cost Structure

Traditional AI:

- Lower infrastructure cost

- More predictable computation requirements

- One-time development focus

LLMs:

- Higher token-based inference cost

- API usage fees

- Infrastructure for vector databases and embeddings

- Continuous optimization

In mid-sized enterprise deployments in Hamilton, teams often underestimate long-term LLM API consumption costs.

Budget modeling is essential.
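A simple budget model multiplies request volume by tokens per request and by the provider's per-token prices. The sketch below uses placeholder prices, not any vendor's actual rates. Many providers price input and output tokens separately and quote prices per million tokens, so check current pricing before relying on the numbers.

```python
def monthly_llm_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                     price_in_per_m: float, price_out_per_m: float, days: int = 30) -> float:
    """Estimate monthly API spend. Prices are per million tokens."""
    per_request = (input_tokens * price_in_per_m + output_tokens * price_out_per_m) / 1_000_000
    return requests_per_day * days * per_request

# Placeholder figures: 5,000 requests/day, 1,500 input + 300 output tokens each,
# $3 per million input tokens and $15 per million output tokens (hypothetical prices)
print(round(monthly_llm_cost(5_000, 1_500, 300, 3.0, 15.0), 2))  # → 1350.0
```

Re-running the estimate with larger prompts, for example after adding retrieved context to every request, shows how quickly the bill scales with token counts.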

7. Scalability and Integration

Traditional AI:

- Harder to repurpose

- Separate model per use case

LLMs:

- Single model can power multiple workflows

- Easier API-based integration

- Faster deployment cycles

This makes LLMs attractive for SaaS companies building multi-functional AI features.
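When a single model powers multiple workflows, the setup is often one model endpoint behind several prompt templates. Here is a minimal sketch. `call_llm` is a hypothetical stand-in for whichever vendor SDK or self-hosted model you use, so the example runs without network access.

```python
from typing import Callable

# One template per workflow; all of them are served by the same model
TEMPLATES = {
    "summarize": "Summarize the following text in three bullet points:\n{text}",
    "classify": "Classify the sentiment of this review as positive or negative:\n{text}",
    "draft_reply": "Draft a polite reply to this customer message:\n{text}",
}

def call_llm(prompt: str) -> str:
    """Hypothetical placeholder for a real model call (vendor SDK, HTTP API, or local model)."""
    return f"[model output for: {prompt.splitlines()[0]}]"

def run_workflow(task: str, text: str, llm: Callable[[str], str] = call_llm) -> str:
    """Fill the task's template and send it to the shared model."""
    if task not in TEMPLATES:
        raise ValueError(f"unknown workflow: {task}")
    return llm(TEMPLATES[task].format(text=text))

for task in TEMPLATES:
    print(run_workflow(task, "The onboarding flow was confusing but support was great."))
```

Adding a workflow here means adding a template, not training and hosting another model. This is the contrast with the separate-model-per-use-case pattern of traditional AI.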

When Should You Choose Traditional AI Models?

Choose traditional AI if:

- Your dataset is structured and historical

- You need explainability

- The task is repetitive and narrow

- You want lower ongoing cost

- Accuracy on a defined metric is critical

Example:

A manufacturing company optimizing predictive maintenance across facilities in Ontario may rely on traditional time-series forecasting models rather than LLMs.

Because structured sensor data doesn’t require generative reasoning.
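For structured sensor data, a classical time-series method can be very small. The sketch below uses simple exponential smoothing, one of many possible forecasting techniques and not necessarily what such a manufacturer would choose. It forecasts the next vibration reading and flags the machine when the forecast crosses a threshold. The readings, smoothing factor, and threshold are all hypothetical.

```python
def exp_smooth_forecast(readings: list[float], alpha: float = 0.5) -> float:
    """Simple exponential smoothing: level = alpha*x + (1-alpha)*level; forecast = final level."""
    if not readings:
        raise ValueError("need at least one reading")
    level = readings[0]
    for x in readings[1:]:
        level = alpha * x + (1 - alpha) * level
    return level

vibration_mm_s = [2.0, 2.2, 2.1, 3.0, 3.8, 4.6]  # hypothetical sensor history
ALERT_THRESHOLD = 3.5  # hypothetical maintenance limit
forecast = exp_smooth_forecast(vibration_mm_s)
print(round(forecast, 2), "schedule maintenance" if forecast > ALERT_THRESHOLD else "ok")
# → 3.89 schedule maintenance
```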

When Should You Choose LLMs?

Choose LLMs if:

- You deal with documents, chats, or emails

- You need conversational interfaces

- You want knowledge automation

- You need cross-domain flexibility

- You want rapid deployment

Customer support automation, AI copilots, and enterprise search systems benefit heavily from LLM infrastructure.

Hybrid Approach: The Real-World Strategy

In practice, most serious deployments combine both.

Example architecture:

- Traditional AI model predicts churn risk.

- LLM generates personalized retention email.

- Vector database stores knowledge embeddings.

- Rule-based system enforces compliance guardrails.

That hybrid stack delivers better ROI than choosing one side blindly.
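The hybrid flow can be sketched as a short pipeline. Every piece here is a hypothetical stand-in. `churn_probability` plays the role of the trained predictive model (here a fixed logistic formula with made-up weights), `draft_email` stands in for the LLM call, and `passes_compliance` is the rule-based guardrail. The vector-database step is omitted to keep the example self-contained.

```python
import math
from typing import Optional

BANNED_PHRASES = ("guaranteed", "risk-free")  # hypothetical compliance rules

def churn_probability(months_inactive: int, support_tickets: int) -> float:
    """Stand-in for a trained churn model: a logistic function with made-up weights."""
    z = -3.0 + 0.8 * months_inactive + 0.5 * support_tickets
    return 1 / (1 + math.exp(-z))

def draft_email(name: str) -> str:
    """Stand-in for an LLM call that would personalize the retention email."""
    return f"Hi {name}, we miss you! Here is 20% off your next month."

def passes_compliance(text: str) -> bool:
    """Rule-based guardrail: reject emails that contain banned phrases."""
    lower = text.lower()
    return not any(phrase in lower for phrase in BANNED_PHRASES)

def retention_pipeline(name: str, months_inactive: int, support_tickets: int,
                       threshold: float = 0.5) -> Optional[str]:
    if churn_probability(months_inactive, support_tickets) < threshold:
        return None  # low churn risk: no email
    email = draft_email(name)
    return email if passes_compliance(email) else None

print(retention_pipeline("Dana", months_inactive=4, support_tickets=2))
print(retention_pipeline("Lee", months_inactive=0, support_tickets=0))  # → None
```

Each component does what it is best at. The predictive model decides *who* gets contacted, the LLM decides *what* to say, and deterministic rules decide what is *allowed* to be sent.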

Performance Considerations

Evaluation metrics differ:

Traditional AI:

- Precision

- Recall

- F1 Score

- RMSE

- ROC-AUC

LLMs:

- Hallucination rate

- Context retention

- Token latency

- Response consistency

- Retrieval accuracy (RAG systems)

Performance benchmarking should align with the business goals.
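Here is a minimal sketch of how some of these metrics are computed: the classical precision, recall, F1, and RMSE, plus hit rate at k as one possible retrieval-accuracy metric for RAG systems. Hit rate at k is the share of queries whose known relevant document appears in the top k results. The inputs are toy data. Hallucination rate and response consistency usually require human or model-based grading and are not shown.

```python
import math

def precision_recall_f1(y_true: list[int], y_pred: list[int]) -> tuple[float, float, float]:
    """Binary classification metrics, treating 1 as the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def rmse(actual: list[float], predicted: list[float]) -> float:
    """Root mean squared error for regression or forecasting."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

def hit_rate_at_k(retrieved: list[list[str]], relevant: list[str], k: int) -> float:
    """Fraction of queries whose relevant document ID appears in the top-k results."""
    hits = sum(1 for docs, rel in zip(retrieved, relevant) if rel in docs[:k])
    return hits / len(relevant)

print(precision_recall_f1([1, 0, 1, 1, 0], [1, 1, 1, 0, 0]))
print(rmse([100, 120, 130], [110, 115, 130]))
print(hit_rate_at_k([["a", "b"], ["c", "d"]], ["b", "e"], k=2))  # → 0.5
```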

Security and Data Privacy

Traditional AI:

- Usually hosted internally

- Full control over data

LLMs:

- Often API-based

- Requires vendor evaluation

- Data retention policies matter

Enterprises implementing AI must review:

- Data encryption

- Model hosting environment

- Fine-tuning control

- Compliance alignment

Long-Term Business Impact

Traditional AI is mainly used to optimize processes and make operations more efficient. LLMs, on the other hand, support work that involves thinking, writing, and decision-making.

Because of this difference, companies often need to adjust how teams are structured and how responsibilities are divided.

Operations teams have usually benefited more from predictive AI systems that help with forecasting and performance tracking.

Marketing, HR, support, and product teams benefit from LLM capabilities.

This shift is why enterprises are restructuring AI budgets toward generative systems while still maintaining classical ML for analytics.

Final Thoughts

The debate around LLMs vs Traditional AI Models should not be framed as one replacing the other.

Traditional AI solves structured prediction problems with outstanding precision. LLMs handle language, context, and reasoning at scale.

Businesses that understand where each belongs build smarter systems — and avoid expensive missteps.

Frequently Asked Questions

What is the main difference between LLMs and traditional AI models?

LLMs and traditional AI models differ mainly in scope and flexibility. Traditional models are task-specific and driven by structured data, while LLMs are general-purpose models trained on large unstructured datasets and capable of handling many language-based tasks.

Are LLMs more accurate than traditional AI models?

Not necessarily. Traditional AI models can often outperform LLMs in narrow, well-defined predictive tasks. LLMs perform better in contextual understanding and language generation.

Which is more cost-effective: LLMs or traditional AI?

Traditional AI models typically have lower ongoing inference costs. LLMs can become expensive due to token-based pricing and infrastructure requirements.

Can businesses combine LLMs and traditional AI?

Yes. A hybrid approach that uses predictive AI models alongside generative AI systems often delivers better results.

Do LLMs replace machine learning models?

No. Machine Learning models remain essential for forecasting, anomaly detection, and numerical prediction tasks. LLMs extend capabilities into language-based applications.