SEO for LLMs is not an experimental concept anymore. It is a necessary shift in how we approach visibility online. Traditional ranking tactics were designed for search engines that displayed ten blue links. AI search systems now interpret, summarise, and recommend information before users even click.

That shift changes how content must be written, structured, and distributed.

If your website is still optimised only for classic search engine optimisation, you may rank on Google but remain invisible inside AI-generated responses. That’s the gap businesses are beginning to notice.

This guide breaks down how AI search optimisation, Answer Engine Optimisation, and structured authority building work together, especially for companies targeting Canadian markets.

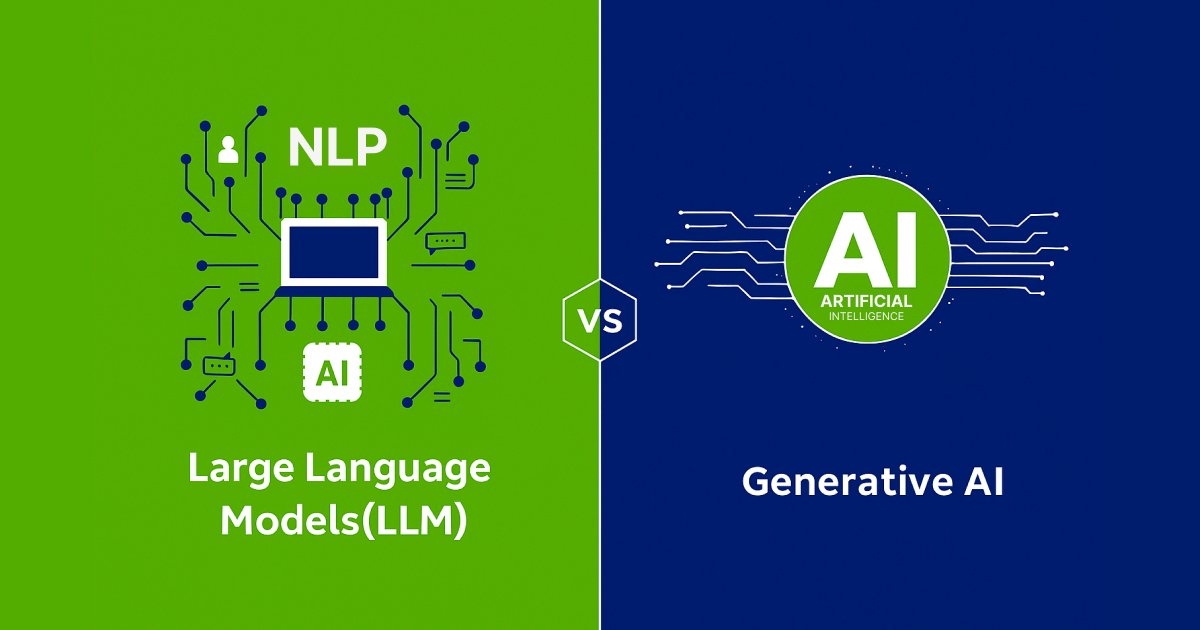

Why SEO for LLMs Is Different From Traditional SEO

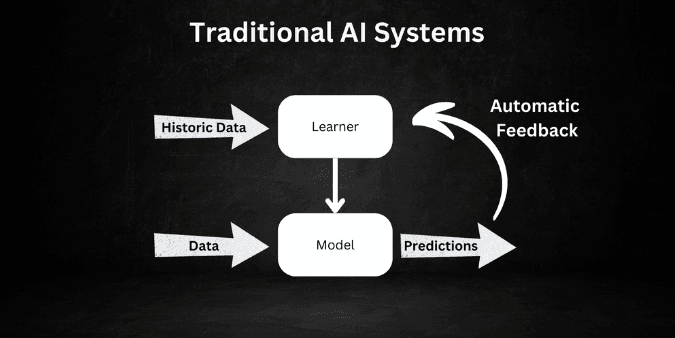

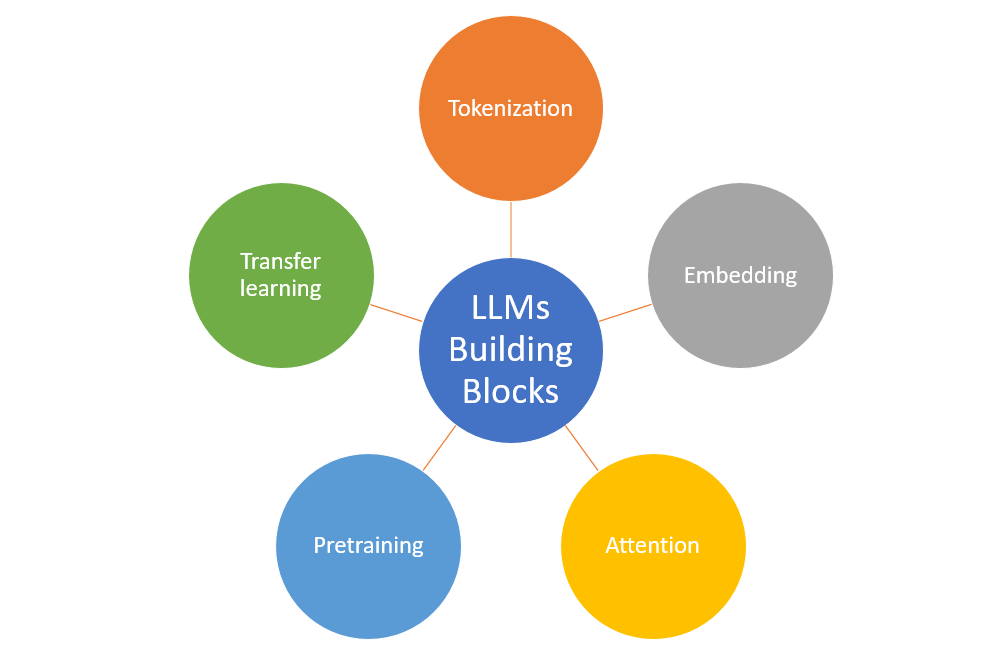

Traditional SEO focused mostly on keywords, backlinks, and technical signals. Those still matter, but large language models evaluate content differently.

They assess:

- Contextual depth

- Clarity of explanation

- Authority signals

- Structured formatting

- Entity relationships

An LLM does not “rank” content the same way Google does. Instead, it analyses patterns across its training data and retrieval sources to determine which content is reliable enough to summarise.

This is where AI SEO strategy begins to differ from conventional optimisation.

You are no longer trying only to rank a page. You are trying to become a reference.

Understanding How AI Search Engines Select Content

AI-driven platforms interpret user queries conversationally. Instead of matching keywords exactly, they evaluate intent and context.

For example, when someone searches:

“Who provides AI search optimisation services near me?”

The system does not simply list websites with that phrase. It attempts to extract clear answers from structured content that demonstrate topical authority.

If your content is vague or overly promotional, it will not be referenced.

Businesses offering AI SEO services in Toronto often assume adding location keywords is enough. It isn’t. AI systems need contextual depth explaining:

- What the service involves

- How it works

- Who it helps

- Why it is credible

Without those layers, you won’t appear in AI-generated summaries.

The Real Meaning of Answer Engine Optimisation (AEO)

Answer Engine Optimisation is about formatting your content so AI systems can directly extract answers from it.

This requires more than adding FAQs at the bottom of a page. It involves writing clearly structured sections where each heading is followed by a concise explanation.

For instance, instead of writing a long paragraph that explains a concept indirectly, define it in the first two sentences and then expand on it.

AI tools scan for definitional clarity. They prefer content that:

- States what something is immediately

- Explains how it works

- Provides context or examples

- Avoids unnecessary filler

When implemented correctly, AEO strategy increases your chances of appearing in AI summaries, featured snippets, and voice assistant responses.
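One way to audit this on your own pages is to check whether each heading is followed by a short opening paragraph. The sketch below is only an illustration: the function name `lead_paragraph_report` and the 60-word threshold are assumptions made for this example, not a known cutoff used by any AI system.

```python
import re


def lead_paragraph_report(sections, max_words=60):
    """Report, per heading, whether the first paragraph is short and direct.

    `sections` maps a heading to the body text that follows it.
    `max_words` is an arbitrary illustrative threshold (an assumption).
    """
    report = {}
    for heading, body in sections.items():
        # The first paragraph is everything before the first blank line.
        first_paragraph = re.split(r"\n\s*\n", body.strip(), maxsplit=1)[0]
        word_count = len(first_paragraph.split())
        report[heading] = {
            "lead_words": word_count,
            "concise": 0 < word_count <= max_words,
        }
    return report


sections = {
    "What is Answer Engine Optimisation?": (
        "Answer Engine Optimisation is the practice of formatting content "
        "so AI systems can extract answers directly.\n\n"
        "The rest of the section expands on the definition."
    ),
}
print(lead_paragraph_report(sections))
```

A section flagged as not concise is a candidate for moving its definition up to the first two sentences.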

How AI Optimisation (AIO) Builds Long-Term Authority

AI Optimisation is not about quick ranking wins. It is about building consistent authority signals across your domain and external ecosystem.

From experience, AI systems favour brands that:

- Publish multiple in-depth resources on related topics

- Maintain consistent terminology

- Build structured internal linking

- Receive relevant mentions across authoritative platforms

If you write one blog about LLM optimisation strategy and nothing else connected to it, AI will not treat you as an authority. But if you create a structured cluster around:

- AI content indexing

- voice search SEO

- entity-based SEO

- structured data SEO

- AI-driven search optimisation

you create contextual reinforcement.

This layered approach signals expertise.
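Structured internal linking within a cluster can be checked mechanically. The following is a minimal sketch, assuming you already have a map of each page to the internal URLs it links to; the URLs and the function name `cluster_link_gaps` are hypothetical.

```python
def cluster_link_gaps(pillar, links):
    """Find cluster pages missing a link to or from the pillar page.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    cluster_pages = [page for page in links if page != pillar]
    pillar_targets = links.get(pillar, set())
    return {
        # Cluster pages the pillar never links to.
        "not_linked_from_pillar": sorted(
            p for p in cluster_pages if p not in pillar_targets
        ),
        # Cluster pages that never link back to the pillar.
        "not_linking_to_pillar": sorted(
            p for p in cluster_pages if pillar not in links[p]
        ),
    }


# Hypothetical site map for illustration only.
site = {
    "/llm-optimisation": {"/entity-based-seo", "/voice-search-seo"},
    "/entity-based-seo": {"/llm-optimisation"},
    "/voice-search-seo": set(),
    "/structured-data-seo": {"/llm-optimisation"},
}
print(cluster_link_gaps("/llm-optimisation", site))
```

Any page listed in either result is a gap in the cluster: the pillar and its supporting pages should link to each other in both directions.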

Structuring Content So AI Can Interpret It Correctly

One mistake I frequently see is long-form content without structural discipline. Walls of text may look detailed but are difficult for machines to interpret.

Content designed for AI search optimisation should follow a logical flow:

- First, define the concept clearly.

- Second, explain why it matters.

- Third, describe implementation.

- Fourth, provide examples or scenarios.

- Finally, address common questions.

This format mirrors how AI systems parse and summarise information.

When I worked with companies targeting AI search optimisation services in Hamilton, restructuring content alone significantly improved their visibility in AI summaries, even before backlink growth.

Structure matters more than people think.

The Role of Semantic SEO and Entity Relationships

Repeating a keyword ten times no longer strengthens content. In fact, it reduces credibility.

AI systems understand topic relationships through semantic signals. That means instead of repeating one phrase, your content should naturally include related concepts.

For example, a strong page on SEO for LLMs may include terms like:

- AI content strategy

- semantic SEO

- schema markup for AI

- voice search optimisation

- machine-readable content

These terms reinforce context without forcing repetition.

AI evaluates relationships between concepts, not just frequency.
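To see the difference between repetition and coverage on a draft, you can compare how often the primary phrase appears with how many related terms are present. This is a rough sketch using plain substring matching; the function name `term_profile` is an assumption for this example, and it says nothing about how any AI system actually scores content.

```python
import re


def term_profile(text, primary, related_terms):
    """Report how often the primary phrase repeats and which related terms appear."""
    lowered = text.lower()
    total_words = len(re.findall(r"[a-z0-9'-]+", lowered))
    primary_hits = lowered.count(primary.lower())
    present = [term for term in related_terms if term.lower() in lowered]
    return {
        "primary_hits": primary_hits,
        # Density of the primary phrase, as occurrences per 100 words.
        "primary_per_100_words": (
            round(100 * primary_hits / total_words, 1) if total_words else 0.0
        ),
        "related_present": present,
        "related_missing": [term for term in related_terms if term not in present],
    }


draft = (
    "SEO for LLMs relies on semantic SEO and schema markup. "
    "SEO for LLMs rewards clarity."
)
print(term_profile(draft, "SEO for LLMs", ["semantic SEO", "schema markup", "voice search"]))
```

A high density with many missing related terms suggests the page leans on repetition rather than context.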

Voice Search and Conversational Queries

Voice queries are longer and more conversational than typed searches. Optimising for voice search SEO means anticipating how people speak.

Someone may ask:

“Who offers reliable LLM optimisation for my business?”

“What is the best way to optimise my website for AI search?”

Your content should mirror natural phrasing and provide direct answers.

Avoid robotic transitions. Write as if you are explaining something clearly to a client sitting across the table.

When done correctly, conversational formatting increases visibility in both AI assistants and traditional search.

Technical Foundations That Support AI Visibility

Even the best content usually fails without proper technical infrastructure. For effective AI-driven search optimisation, your website must:

- Load quickly across all devices.

- Maintain a clean URL structure.

- Avoid duplicate content issues.

- Use canonical tags correctly.

- Implement structured schema markup.
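Canonical tags are one of the easier items on this list to verify automatically. The sketch below uses Python's standard `html.parser` module to classify a page's HTML; the class and function names (`CanonicalFinder`, `canonical_status`) and the example URLs are assumptions for illustration.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonicals.append(attributes.get("href"))


def canonical_status(html):
    """Classify a page as 'ok', 'missing', or 'conflicting'."""
    finder = CanonicalFinder()
    finder.feed(html)
    # Ignore canonical tags with an empty or absent href.
    unique = {href for href in finder.canonicals if href}
    if not unique:
        return "missing"
    return "ok" if len(unique) == 1 else "conflicting"


page = '<head><link rel="canonical" href="https://example.com/seo-for-llms"></head>'
print(canonical_status(page))
```

This only checks that exactly one canonical URL is declared. Whether that URL is the right one for the page still needs a manual review.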

Structured data such as FAQ schema and Article schema helps machines interpret your content with confidence. Technical clarity builds machine trust.
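As an illustration of FAQ schema, the sketch below builds a schema.org `FAQPage` object as JSON-LD from question and answer pairs. The helper name `faq_jsonld` is an assumption for this example; the question and answer are taken from the FAQ section of this guide.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faqs = [
    (
        "What is SEO for LLMs?",
        "SEO for LLMs is the process of structuring and optimising content "
        "so large language models can interpret, summarise, and recommend "
        "your information in AI-generated responses.",
    ),
]

# JSON-LD is usually embedded in the page inside a script tag of this type.
markup = json.dumps(faq_jsonld(faqs), indent=2)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The markup should mirror questions and answers that are actually visible on the page, and it is worth running the output through a structured data validator before publishing.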

Building Authority Through Content Depth

Surface-level articles rarely get referenced. AI systems prefer content that demonstrates layered understanding.

Depth does not mean writing filler. It means covering:

- Definitions

- Use cases with practical examples

- Implementation steps with clear explanations

- Challenges faced

- Detailed real-world observations

For example, businesses offering AI SEO services in Ontario should publish case studies that show:

- Problem

- Strategy

- Implementation

- Outcome

Specificity builds credibility.

Common Mistakes in SEO for LLMs

One frequent mistake is treating AI search as a new keyword opportunity rather than a structural shift. Another is publishing thin blog posts that target high-volume terms without any topical depth.

Some companies add FAQs randomly without aligning them to actual user intent. And many ignore schema completely. Rectifying these issues often produces measurable improvements within months: not overnight, but steadily.

Measuring Success in AI Search

Traditional metrics still matter: rankings, traffic, and conversions.

But for AI SEO strategy, additional signals are important:

- AI-generated brand mentions

- Inclusion in featured snippets

- Increased branded search queries

- Knowledge panel improvements

AI visibility for a website is subtle at the beginning but compounds over time.
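AI-generated brand mentions can be tracked with a simple ratio if you periodically save the answers AI tools give to a fixed set of prompts. This is a minimal sketch under that assumption: the function name `brand_mention_rate`, the brand "Acme Digital", and the sample responses are all hypothetical.

```python
import re


def brand_mention_rate(responses, brand):
    """Return the share of saved AI responses that mention the brand by name."""
    if not responses:
        return 0.0
    # Whole-phrase, case-insensitive match; assumes the brand name
    # starts and ends with a letter or digit.
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    mentioned = sum(1 for text in responses if pattern.search(text))
    return mentioned / len(responses)


# Hypothetical saved responses for illustration only.
saved_responses = [
    "Acme Digital and a few other agencies offer this service.",
    "Several agencies in the region specialise in this.",
    "You could try acme digital for an audit.",
    "Acmedigital is an unrelated product.",
]
print(brand_mention_rate(saved_responses, "Acme Digital"))
```

Recording this rate against the same prompts each month gives a trend line to sit alongside rankings, traffic, and conversions.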

Closing Perspective

The shift toward AI search is not about abandoning traditional SEO. It is about refining it.

The brands that win in this space are not chasing keywords blindly. They are building structured authority, publishing clear explanations, and reinforcing expertise across interconnected topics.

SEO for LLMs rewards clarity, depth, and discipline.

And unlike short-term ranking tactics, this approach compounds over time.

Frequently Asked Questions

What is SEO for LLMs?

SEO for LLMs is the process of structuring and optimising content so large language models can interpret, summarise, and recommend your information in AI-generated responses.

How does AI search optimisation work?

AI search optimisation focuses on semantic clarity, structured answers, authority signals, and machine-readable formatting rather than just keyword rankings.

What is the key difference between AEO and traditional SEO?

Answer Engine Optimisation prioritises providing direct, extractable answers for AI systems, while traditional SEO focuses more on ranking webpages in search results.

Does schema markup improve AI visibility?

Yes. Implementing schema markup for AI improves content interpretation and increases the chances of being referenced in AI summaries.

How important is voice search SEO?

Voice search SEO is increasingly important because conversational queries are growing across smart assistants and AI platforms.

Can local businesses rank in AI-generated answers?

Yes. With structured content and a strong local AI SEO strategy, regional businesses can appear in AI-driven responses.